In the universe of programming languages there is a rising staR. It is moving fasteR and getting biggeR and brighteR!

OK, you get the hint! It is the R language.

R is a successor to the S language. It is extremely powerful for statistical computing and data processing. Like Python and Perl, it is an interpreted language. Much of R's power comes from its 4000+ add-on packages, which make it almost indispensable for any type of statistical computing.

As I mentioned above, R is, in my opinion, soon going to play a central role in the technological world. Today we are flooded with data from all sides. To make sense of this data deluge we need techniques like Big Data, analytics and machine learning. This is where R becomes critical, with its numerous packages that make short work of data. R also has very interesting graphics packages that display data in many forms for faster analysis and easier consumption.

R can easily ingest large data sets in CSV format and perform many computations on them. R is used in machine learning, data mining, classification and clustering, and text mining, as well as in sentiment analysis of social network data.
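Reading CSV data into R can be sketched with base R's `write.csv()` and `read.csv()`. This is a minimal example that assumes a temporary file in place of a real data file. It writes the built-in iris data out, reads it back as a data frame and computes column means. The names `csv_path`, `flowers` and `measurement_means` are illustrative only.

```r
# Minimal sketch: round-trip a data frame through a CSV file and
# compute statistics on the columns read back in.
# A temporary file stands in for a real CSV file on disk (assumption).
csv_path <- tempfile(fileext = ".csv")

# Write the built-in iris data frame out as CSV, without row names
write.csv(iris, csv_path, row.names = FALSE)

# Read it back; read.csv() returns a data frame
flowers <- read.csv(csv_path)

# Mean of each of the four numeric measurement columns
measurement_means <- colMeans(flowers[, 1:4])
print(measurement_means)

# Clean up the temporary file
unlink(csv_path)
```

For a real file you would simply pass its path to `read.csv()`; the rest of the computation stays the same.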

R contains the usual programming constructs, namely logical operations, loops, assignment and so on. It makes it easy to assign values to vectors, matrices and arrays and to perform all the associated operations on them.
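A small sketch of these constructs in base R follows: assigning a vector, element-wise arithmetic, building a matrix and an array, matrix multiplication, and a `for` loop with an `if`/`else` test. The variable names are purely illustrative.

```r
# Assign a numeric vector with c() and do element-wise arithmetic
x <- c(2, 4, 6, 8)
doubled <- x * 2            # vectorized: no explicit loop needed

# A 2 x 3 matrix, filled column by column from 1:6
m <- matrix(1:6, nrow = 2)
m_t <- t(m)                 # transpose: 3 x 2
product <- m %*% m_t        # matrix multiplication: 2 x 2

# A 2 x 3 x 2 array
a <- array(1:12, dim = c(2, 3, 2))

# An explicit for loop with a logical test on each element
labels <- character(length(x))
for (i in seq_along(x)) {
  if (x[i] > 5) {
    labels[i] <- "big"
  } else {
    labels[i] <- "small"
  }
}

print(doubled)
print(product)
print(labels)
```

The loop is written out to show the syntax; idiomatic R would usually replace it with the vectorized `ifelse(x > 5, "big", "small")`, which gives the same result.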

R can be installed from the R Project website. The R distribution comes with many datasets collected from various sources. One such dataset is the Iris dataset, which describes the Iris plant (Iris is a genus of 260–300 species of flowering plants with showy flowers).

The dataset contains 5 variables:

1. Sepal length

2. Sepal width

3. Petal length

4. Petal width

5. Species
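Because iris ships with R (in the `datasets` package), you can inspect it directly without downloading anything. This sketch checks its dimensions, column names and column types, and counts the samples per species; the variable names are illustrative.

```r
# Number of rows and columns
dims <- dim(iris)
print(dims)

# The five column names
cols <- names(iris)
print(cols)

# Compact view of each column's type and first values
str(iris)

# Species is a factor; table() counts the samples per species
species_counts <- table(iris$Species)
print(species_counts)
```

The counts agree with the `summary()` output further down: 50 samples for each of the three species.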

This dataset has been used in many research papers. R lets you easily perform sophisticated statistical operations on it. Included below are some sample operations you can perform on the Iris dataset, or on any other dataset:

```
> iris[1:5, ]
  Sepal.Length Sepal.Width Petal.Length Petal.Width Species
1          5.1         3.5          1.4         0.2  setosa
2          4.9         3.0          1.4         0.2  setosa
3          4.7         3.2          1.3         0.2  setosa
4          4.6         3.1          1.5         0.2  setosa
5          5.0         3.6          1.4         0.2  setosa
```

```
> summary(iris)
  Sepal.Length    Sepal.Width     Petal.Length    Petal.Width          Species
 Min.   :4.300   Min.   :2.000   Min.   :1.000   Min.   :0.100   setosa    :50
 1st Qu.:5.100   1st Qu.:2.800   1st Qu.:1.600   1st Qu.:0.300   versicolor:50
 Median :5.800   Median :3.000   Median :4.350   Median :1.300   virginica :50
 Mean   :5.843   Mean   :3.057   Mean   :3.758   Mean   :1.199
 3rd Qu.:6.400   3rd Qu.:3.300   3rd Qu.:5.100   3rd Qu.:1.800
 Max.   :7.900   Max.   :4.400   Max.   :6.900   Max.   :2.500
```
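`summary()` describes the whole dataset at once. A natural next step, sketched here with base R's `aggregate()` and `tapply()`, is to compute the same statistics separately for each species. The choice of `mean` as the summary function is just an example; any function of a numeric vector could be passed instead.

```r
# Mean of every measurement, computed separately for each species.
# The formula ". ~ Species" means: all other columns, grouped by Species.
species_means <- aggregate(. ~ Species, data = iris, FUN = mean)
print(species_means)

# tapply() does the same grouping for a single column
petal_length_by_species <- tapply(iris$Petal.Length, iris$Species, mean)
print(petal_length_by_species)
```

The per-species means show clearly how much larger the petals of virginica are than those of setosa, which the pooled `summary()` output hides.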

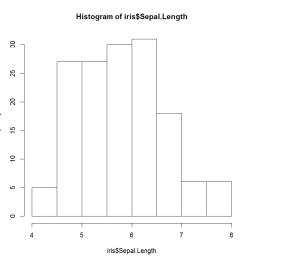

>hist(iris$Sepal.Length)
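`hist()` both bins the data and draws the plot. Passing `plot = FALSE` returns the binning without drawing, which is a handy way to see what the histogram is built from. This sketch relies on the default bin breaks that R chooses (Sturges' rule); the variable name `h` is illustrative.

```r
# Compute the histogram bins without drawing anything
h <- hist(iris$Sepal.Length, plot = FALSE)

# Bin boundaries, bin midpoints and the number of flowers in each bin
print(h$breaks)
print(h$mids)
print(h$counts)
```

Every one of the 150 sepal lengths falls into exactly one bin, so the counts add up to the number of rows in the dataset.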

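Here is a 3D scatter plot of the petal width, sepal length and sepal width. It uses the scatterplot3d package, which is not part of base R and can be installed from CRAN with install.packages("scatterplot3d"):

```
> library(scatterplot3d)
> scatterplot3d(iris$Petal.Width, iris$Sepal.Length, iris$Sepal.Width)
```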

As you can see, R and its numerous packages can really make short work of data. I have only skimmed the surface of the R language.

I hope this has whetted your appetite. Do give R a spin!

Watch this space!

You may also like

1. Introducing cricketr! : An R package to analyze performances of cricketers

2. Literacy in India : A deepR dive.

3. Natural Language Processing: What would Shakespeare say?

4. Revisiting crimes against women in India

5. Sixer – R package cricketr’s new Shiny Avatar

Also see

1. Designing a Social Web Portal

2. Design principles of scalable, distributed systems

3. A Cloud Medley with IBM’s Bluemix, Cloudant and Node.js

4. Programming Zen and now – Some essential tips -2

5. Fun simulation of a Chain in Android

Checkout my book ‘Deep Learning from first principles Second Edition- In vectorized Python, R and Octave’. My book is available on Amazon as